Authors: Sridharan, Srinivas; Tsai, Louie; Kalamkar, Dhiraj D; Shiryaev, Mikhail; Durnov, Dmitry

Introduction

Modern deep learning models are growing at an exponential rate: parameter counts in the latest models have grown from millions to billions. To train models of this size within hours, distributed training is the practical option.

In this article, we distribute training of the DLRM model using PyTorch with different communication backends and shed light on the performance benefit of the oneCCL backend.

Intel® oneAPI Collective Communications Library

The Intel® oneAPI Collective Communications Library (oneCCL) enables developers and researchers to more quickly train newer and deeper models. This is done by using optimized communication patterns to distribute model training across multiple nodes.

The main features of oneCCL include:

- Built on top of lower-level communication middleware: MPI and libfabric transparently support many interconnects, such as Intel® Omni-Path Architecture, InfiniBand*, and Ethernet.

- Optimized for high performance on Intel® CPUs and GPUs.

- Allows the tradeoff of compute for communication performance to drive scalability of communication patterns.

- Enables efficient implementations of collectives that are heavily used for neural network training, including all-gather, all-reduce, and reduce-scatter.
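To make the three collectives named above concrete, here is a minimal single-process sketch of their semantics. It only simulates ranks as Python lists and is not how oneCCL implements them; the function names are illustrative.

```python
from typing import List

Buffers = List[List[float]]  # buffers[r] is the local buffer of rank r


def all_reduce(buffers: Buffers) -> Buffers:
    """Every rank ends up with the element-wise sum over all ranks."""
    total = [sum(vals) for vals in zip(*buffers)]
    return [list(total) for _ in buffers]


def all_gather(buffers: Buffers) -> Buffers:
    """Every rank ends up with the concatenation of all ranks' buffers."""
    gathered = [x for buf in buffers for x in buf]
    return [list(gathered) for _ in buffers]


def reduce_scatter(buffers: Buffers) -> Buffers:
    """Rank r ends up with the r-th chunk of the element-wise sum."""
    n = len(buffers)
    total = [sum(vals) for vals in zip(*buffers)]
    chunk = len(total) // n  # sketch assumes the length divides evenly
    return [total[r * chunk:(r + 1) * chunk] for r in range(n)]


ranks = [[1.0, 2.0], [10.0, 20.0]]  # two simulated ranks
print(all_reduce(ranks))
print(all_gather(ranks))
print(reduce_scatter(ranks))
```

Note that an all-reduce is equivalent to a reduce-scatter followed by an all-gather, which is one reason these three collectives are usually discussed together.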

Intel oneCCL is implemented according to the oneAPI specification to promote compatibility and developer productivity, and the detailed oneCCL specification is published as part of the oneAPI specification.

For PyTorch users, Intel also provides torch-ccl, the Intel-maintained PyTorch bindings for the Intel® oneAPI Collective Communications Library (oneCCL).

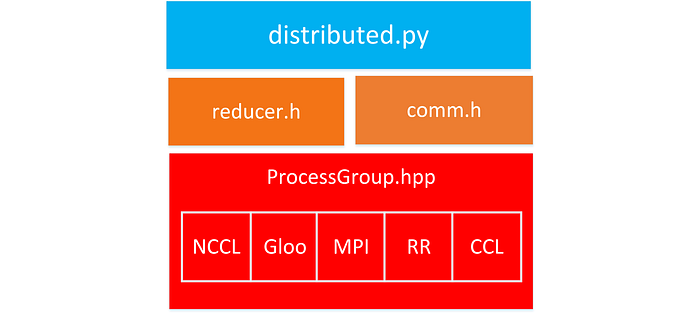

The torch-ccl module implements the PyTorch C10D ProcessGroup API and can be dynamically loaded as an external ProcessGroup, so users can switch the PyTorch communication backend from the built-in ones to CCL. The figure above shows the related software stack for PyTorch DistributedDataParallel, in which CCL is one of the communication backend options along with NCCL, Gloo, MPI, and RR.
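Switching backends can be sketched roughly as follows. This is a hedged sketch, not the article's launch script: the helper names `load_ccl_bindings` and `init_distributed` are hypothetical, the Gloo fallback is our own choice, and the bindings' module name is an assumption that depends on the installed release (`torch_ccl` in older releases, `oneccl_bindings_for_pytorch` in newer ones).

```python
import importlib
import os

# Module names under which the oneCCL bindings have shipped (assumption:
# which one is present depends on the installed release).
CCL_MODULES = ("oneccl_bindings_for_pytorch", "torch_ccl")


def load_ccl_bindings():
    """Import the bindings so that the "ccl" backend is registered with PyTorch.

    Returns the name of the imported module, or None if none is installed.
    """
    for name in CCL_MODULES:
        try:
            importlib.import_module(name)
            return name
        except ImportError:
            continue
    return None


def init_distributed(rank, world_size, master_addr="127.0.0.1", master_port="29500"):
    """Initialize the default process group, preferring CCL over Gloo."""
    import torch.distributed as dist  # lazy import: requires PyTorch

    os.environ.setdefault("MASTER_ADDR", master_addr)
    os.environ.setdefault("MASTER_PORT", master_port)
    backend = "ccl" if load_ccl_bindings() else "gloo"
    dist.init_process_group(backend=backend, rank=rank, world_size=world_size)
    return backend
```

After `init_distributed` returns, a model wrapped in `torch.nn.parallel.DistributedDataParallel` uses whichever backend was selected, with no other change to the training loop.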

DLRM: a new era of deep learning workloads from Facebook

Over the last two years, a lot of research has been published that addresses the fusion of artificial intelligence (AI) and high-performance computing (HPC). While this research focuses on the extreme-scale and HPC aspects, it is often limited to training convolutional neural networks (CNNs).

In contrast, the most common use of AI today is not in CNNs. CNNs contribute only a single-digit or very low double-digit percentage to the workload mix in modern data centers [4].

Thus, we believe the HPC research community needs to shift its focus away from CNN models to the models that have the highest share of the relevant application mix: 1) recommender systems (RecSys) and 2) language models, e.g. recurrent neural networks/long short-term memory (RNN/LSTM) and attention/transformer models.

Facebook recently proposed a deep learning recommendation model (DLRM) [2]. Its purpose is to allow hardware vendors and cloud service providers to study different system configurations. DLRM comprises the following major components:

a) a sparse embedding realized by tables (databases) of various sizes (Emb. Lookup 0-S in Fig. 2)

b) small dense multi-layer perceptron (MLP) layers (Bottom MLP in Fig. 2)

c) a larger and deeper MLP (Top MLP in Fig. 2)

Both a) and b) interact and are fed into c).
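The data flow through components a), b), and c) can be sketched in plain Python. This is a deliberately simplified illustration, not the DLRM reference code: each MLP is reduced to a single ReLU layer, multi-hot lookups are sum-pooled, and the interaction is assumed to be the pairwise dot product described in [2]. All names are hypothetical.

```python
def linear_relu(x, weights):
    """One dense layer followed by ReLU; weights is a list of rows (out x in)."""
    return [max(0.0, sum(w * v for w, v in zip(row, x))) for row in weights]


def dlrm_forward(dense_x, sparse_ids, tables, bottom_w, top_w):
    # b) the bottom MLP maps the dense features to the embedding dimension
    dense_vec = linear_relu(dense_x, bottom_w)
    dim = len(dense_vec)
    # a) one lookup per embedding table; the looked-up rows are sum-pooled
    emb_vecs = [
        [sum(table[i][d] for i in ids) for d in range(dim)]
        for table, ids in zip(tables, sparse_ids)
    ]
    # interaction: dot product between every pair of feature vectors
    feats = [dense_vec] + emb_vecs
    dots = [
        sum(a * b for a, b in zip(feats[i], feats[j]))
        for i in range(len(feats))
        for j in range(i)
    ]
    # c) the top MLP consumes the dense vector plus the interaction terms
    return linear_relu(dense_vec + dots, top_w)


# Tiny example: 2 dense features, 2 embedding tables of dimension 2.
tables = [[[1.0, 0.0], [0.0, 1.0]], [[2.0, 2.0]]]
out = dlrm_forward(
    dense_x=[1.0, 2.0],
    sparse_ids=[[0, 1], [0]],
    tables=tables,
    bottom_w=[[1.0, 0.0], [0.0, 1.0]],
    top_w=[[1.0, 1.0, 1.0, 1.0, 1.0]],
)
print(out)
```

Even in this toy form, the three cost centers are visible: the table lookups are memory-bound, the MLPs are compute-bound, and the interaction needs both kinds of output in one place.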

This work shows that DLRM marks the start of a new era of deep learning workloads, owing to its sparse model portion, its dense model portion, and the communication and interaction between the two. The sparse portion challenges memory capacity and bandwidth, the dense portion challenges the compute capabilities of the system, and the interaction stresses the interconnect.

In short, DLRM training performance needs a design that balances memory capacity, memory bandwidth, interconnect bandwidth, and compute/floating-point performance.

Multi-Socket and Multi-Node DLRM

The original DLRM code from Facebook supports device-based hybrid parallelization strategies: it uses data parallelism for the MLPs and model parallelism for the embeddings. MLP layers are therefore replicated on each agent, and the input to the first MLP layer is split along the minibatch dimension, whereas embedding tables are distributed across the available agents and each table produces output for the full minibatch.
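The two layouts, and the all-to-all exchange that converts one into the other, can be simulated in a few lines. This sketch assumes one embedding table per rank and a minibatch that divides evenly across ranks; the function names are illustrative and the exchange is simulated in a single process.

```python
def all_to_all(send):
    """send[src][dst] is the chunk rank `src` sends to rank `dst`.

    Returns recv such that recv[dst][src] == send[src][dst].
    """
    n = len(send)
    return [[send[src][dst] for src in range(n)] for dst in range(n)]


def embedding_all_to_all(emb_out, num_ranks):
    """emb_out[r] is rank r's table output for the FULL minibatch.

    After the exchange, rank r holds every table's output for ITS slice of
    the minibatch, which matches the layout of the data-parallel MLP output.
    """
    batch = len(emb_out[0])
    per_rank = batch // num_ranks  # sketch assumes an even split
    send = [
        [out[dst * per_rank:(dst + 1) * per_rank] for dst in range(num_ranks)]
        for out in emb_out
    ]
    return all_to_all(send)


# Two ranks, minibatch of 4 samples; "t1s2" = table 1's output for sample 2.
emb_out = [[f"t{t}s{s}" for s in range(4)] for t in range(2)]
for rank, chunks in enumerate(embedding_all_to_all(emb_out, 2)):
    print(rank, chunks)
```

Every rank sends a distinct chunk to every other rank, so the traffic grows with both the minibatch size and the number of tables.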

The hybrid scheme leads to a mismatch in minibatch size at the interaction operation and requires communication to align the minibatch of the embedding output with the bottom MLP output. The multi-device (e.g. socket, GPU, accelerator, etc.) implementation of DLRM uses all-to-all communication to redistribute the embedding output over the minibatch before entering the interaction operation.

DLRM performance analysis and optimization from oneCCL for PyTorch

Intel has analyzed distributed DLRM performance and optimized it on PyTorch [1]. The sections below cover the related performance analysis from that work.

Multi-Socket / Multi-node DLRM results and related performance benefit from oneCCL

For the multi-socket performance numbers, we measured on a 64-socket cluster of 32 dual-socket nodes with Intel Xeon Platinum 8280 processors. Each socket has 28 CPU cores.

The nodes are connected in a classic pruned fat-tree: the cluster is split into two groups of 16 nodes (32 sockets), each group is connected to one leaf OPA switch, and both leaf OPA switches are connected with 16 links to the root OPA switch. Storage and head nodes are also connected to the root OPA switch.

For the multi-node setup, we assigned one MPI process per socket and dedicated 24 cores per socket to the backpropagation GEMMs. When using the CCL backend, we created 4 CCL workers per socket for communication and pinned them to the first 4 CPU cores.
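The core split described above could be expressed as environment settings along these lines. This is a sketch under assumptions: cores are assumed to be numbered contiguously per socket (0-27 on socket 0, 28-55 on socket 1), `CCL_WORKER_AFFINITY` is assumed to list the worker cores of all local processes in order, and the helper names are hypothetical. The exact semantics should be checked against the oneCCL documentation for the installed version.

```python
def ccl_core_layout(sockets_per_node=2, cores_per_socket=28, ccl_workers=4):
    """Split each socket's cores into communication and compute cores."""
    comm, compute = [], []
    for socket in range(sockets_per_node):
        first = socket * cores_per_socket  # assumes contiguous numbering
        comm.append(list(range(first, first + ccl_workers)))
        compute.append(list(range(first + ccl_workers, first + cores_per_socket)))
    return comm, compute


def launch_env(sockets_per_node=2, cores_per_socket=28, ccl_workers=4):
    """Environment variables for one node running one process per socket."""
    comm, compute = ccl_core_layout(sockets_per_node, cores_per_socket, ccl_workers)
    return {
        # number of oneCCL worker threads per process
        "CCL_WORKER_COUNT": str(ccl_workers),
        # cores the workers are pinned to, for all local processes
        "CCL_WORKER_AFFINITY": ",".join(str(c) for cores in comm for c in cores),
        # compute threads per process (the remaining cores of one socket)
        "OMP_NUM_THREADS": str(len(compute[0])),
    }


print(launch_env())
```

Keeping the compute threads off the worker cores is also a launcher concern (for example through the OpenMP affinity settings), which this sketch does not cover.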

To evaluate different pressure points of our hardware systems, we use three DLRM configurations: Small, Large, and MLPerf.

- The Small variant is identical to the model problem used in DLRM’s release paper [2].

- The Large variant is the Small problem scaled up in every aspect for scale-out runs and is the best representative of production workloads in terms of actual compute and memory capacity requirements.

- The MLPerf configuration was recently proposed as a benchmark configuration for evaluating the performance of recommendation system training [3].

To compare scaling performance across these configurations, we measured both strong-scaling speed-up and weak-scaling efficiency, shown in Figure 4 and Figure 5.

We achieve up to 8.5× end-to-end speed up for the MLPerf config when running on 26 sockets (65% efficiency) and about 5× to 6× speed up when increasing the number of sockets by 8× for the small and large configs (62.5%-75% efficiency).
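The efficiency figures quoted above follow from dividing the speed-up by the factor by which the socket count grew. The short check below reproduces them. Note the assumption for the MLPerf number: 65% corresponds to a 13× increase in sockets (for example from 2 to 26), a baseline the text does not state explicitly.

```python
def scaling_efficiency(speedup, resource_factor):
    """Strong-scaling efficiency: achieved speed-up over ideal speed-up."""
    return speedup / resource_factor


# Small and Large configs: 5x to 6x speed-up on 8x the sockets.
print(f"{scaling_efficiency(5.0, 8.0):.1%}")
print(f"{scaling_efficiency(6.0, 8.0):.1%}")

# MLPerf config: 8.5x speed-up; 13x more sockets is an ASSUMED baseline.
print(f"{scaling_efficiency(8.5, 13.0):.1%}")
```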

To understand this in more detail, we look at the compute-communication breakdown for large and MLPerf configs as shown in Figure 6 and 7.

We instrumented the PyTorch source code to optionally perform blocking communication for allreduce and alltoall (shown in the “blocking” part of the graphs). The bars in the “overlapping” part show compute time and exposed communication time as seen by the application after overlap.

As we can see, with the MPI backend not only is communication time higher than with the CCL backend, but compute time also grows significantly. We observed that almost all compute kernels were slowed down by the communication overlap. This happened because the thread spawned to drive MPI communication interfered with the compute threads, slowing down both compute and communication.

In contrast, CCL provides a mechanism to bind the communication threads to specific cores and to exclude those cores from the compute cores, so with the CCL backend we see no compute slowdown and much better overlap of compute and communication.
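One way to read the blocking and overlapping bars together is sketched below. The function is our own illustration, not part of the instrumented PyTorch code, and the timings in the usage lines are made-up placeholders chosen only to show the two qualitative cases; they are not measurements from the article.

```python
def overlap_summary(compute_blocking, comm_blocking, compute_overlap, exposed_comm):
    """Compare a blocking run with an overlapped run (all times in seconds)."""
    hidden = max(0.0, comm_blocking - exposed_comm)
    return {
        # share of communication time hidden behind compute
        "hidden_comm_fraction": hidden / comm_blocking,
        # > 1.0 means compute kernels got slower when overlap was enabled
        "compute_slowdown": compute_overlap / compute_blocking,
        # > 1.0 means the overlapped run is faster end to end
        "end_to_end_gain": (compute_blocking + comm_blocking)
        / (compute_overlap + exposed_comm),
    }


# Hypothetical timings: overlap without interference between threads.
print(overlap_summary(10.0, 4.0, 10.0, 1.0))
# Hypothetical timings: communication thread disturbs the compute threads.
print(overlap_summary(10.0, 4.0, 13.0, 3.0))
```

In the second case the compute slowdown outweighs the hidden communication, so enabling overlap makes the run slower than blocking communication.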

BFLOAT16 training supported by oneCCL backend on Intel Xeon Scalable processors

As a continuation of our CPU optimizations, we explored low-precision DLRM training using the BFLOAT16 data type, which is supported on 3rd generation Intel Xeon Scalable processors code-named Cooper Lake (CPX).

In contrast to the IEEE754-standardized 16-bit (FP16) variant, BFLOAT16 does not compromise at all on range compared to FP32. As a reminder, FP32 numbers have 8 bits of exponent and 24 bits of mantissa (one implicit). BFLOAT16 cuts 16 bits from the 24-bit FP32 mantissa to create a 16-bit floating-point data type, so a BFLOAT16 value has the same bit layout as the upper 16 bits of an FP32 value.
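The relationship between the two formats can be checked directly on the bit patterns. The sketch below converts by truncation for simplicity; hardware conversions typically round to nearest even instead, so treat this as an illustration of the layout rather than of the exact conversion. The helper names are illustrative.

```python
import struct


def fp32_bits(x):
    """Bit pattern of x after rounding it to FP32."""
    return struct.unpack("<I", struct.pack("<f", x))[0]


def bits_to_fp32(bits):
    """FP32 value encoded by a 32-bit pattern."""
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def to_bf16_bits(x):
    """Keep sign, 8 exponent bits, and the top 7 mantissa bits (truncation)."""
    return fp32_bits(x) >> 16


def bf16_to_fp32(b):
    """Widen BFLOAT16 to FP32 by appending 16 zero mantissa bits."""
    return bits_to_fp32(b << 16)


print(hex(fp32_bits(1.0)), hex(to_bf16_bits(1.0)))

# A value far beyond the FP16 maximum (65504) survives with reduced precision.
big = 3.0e38
print(bf16_to_fp32(to_bf16_bits(big)))
```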

To better support BFLOAT16 training, we also implemented a Split-SGD-BF16 solver, which aims to efficiently exploit the aforementioned aliasing of BF16 and FP32.

As a result, it avoids the overhead of keeping a separate FP32 master copy of the weights: with respect to capacity requirements it is equal to FP32 training, because the master weights are stored implicitly.
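The idea of implicitly stored master weights can be sketched as follows: each FP32 weight is kept as two 16-bit halves, where the upper half is a valid BFLOAT16 weight for the forward and backward passes and the lower half is only touched by the optimizer. This is a minimal scalar illustration under that reading of the text, not the authors' solver; the function names are hypothetical.

```python
import struct


def split_fp32(x):
    """Split the FP32 encoding of x into (upper 16 bits, lower 16 bits)."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    return bits >> 16, bits & 0xFFFF


def join_fp32(hi, lo):
    """Reassemble an FP32 value from its two 16-bit halves."""
    return struct.unpack("<f", struct.pack("<I", (hi << 16) | lo))[0]


def split_sgd_step(hi, lo, grad_bf16_bits, lr):
    """One SGD step on a weight stored as two 16-bit halves."""
    weight = join_fp32(hi, lo)           # exact FP32 master weight
    grad = join_fp32(grad_bf16_bits, 0)  # BFLOAT16 gradient widened to FP32
    # Python computes in double precision; split_fp32 rounds back to FP32.
    return split_fp32(weight - lr * grad)


hi, lo = split_fp32(1.0)
hi, lo = split_sgd_step(hi, lo, grad_bf16_bits=0x3F80, lr=2.0 ** -10)
print(join_fp32(hi, 0))   # what the BFLOAT16 forward/backward pass sees
print(join_fp32(hi, lo))  # the full-precision weight kept by the optimizer
```

The two halves together occupy the same 32 bits as an FP32 weight, which is why the capacity requirement matches FP32 training.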

When we use distributed training, Split-SGD-BF16 allows us to compute gradients in BFLOAT16 and also to communicate in BFLOAT16, resulting in a close to 2× reduction in communication time. The performance benefits were particularly visible for the MLPerf config on 2 sockets, where scaling was primarily limited by all-to-all communication time.

Besides the BFLOAT16 speed-up, Fig. 8 also compares the CPX 4-socket node with the SKX 8-socket node. Here we observe that the CPX node matches the SKX node throughput for the Small config and outperforms the SKX node by ∼2× for the MLPerf config. This is due to the better balance of the machine: 20% higher memory bandwidth and 2× faster inter-socket communication.

Conclusion

To train modern models within hours, distributed training is the practical option for large models.

For distributed training, we introduced the Intel® oneAPI Collective Communications Library and torch-ccl, which provide efficient implementations of collective communication such as all-to-all.

We also showed how PyTorch with the CCL backend can significantly speed up training of AI topologies, with strong and weak scaling across various problem sizes. This holds in particular for Deep Learning Recommendation Model (DLRM) training, which needs a design that balances memory capacity, memory bandwidth, interconnect bandwidth, and compute/floating-point performance.

With the CCL backend, we achieve up to 8.5× end-to-end speed-up for the MLPerf config when running on 26 sockets, and 5× to 6× speed-up when increasing the number of sockets by 8× for the Small and Large configs.

In contrast, the MPI backend achieves only ~6× end-to-end speed-up for the MLPerf config when running on 26 sockets, and ~4× speed-up when increasing the number of sockets by 8× for the Small and Large configs.

We also leveraged the BFLOAT16 data type, which allows for up to 1.8× speed-up on the latest CPUs (Intel Cooper Lake) while matching FP32 accuracy.

References

[1] Dhiraj Kalamkar, Jianping Chen, Evangelos Georganas, Sudarshan Srinivasan, Mikhail Shiryaev, and Alexander Heinecke, “Optimizing Deep Learning Recommender Systems Training on CPU Cluster Architectures.”

[2] M. Naumov, D. Mudigere, H.-J. M. Shi, J. Huang, N. Sundaraman, J. Park, X. Wang, U. Gupta, C.-J. Wu, A. G. Azzolini, D. Dzhulgakov, A. Mallevich, I. Cherniavskii, Y. Lu, R. Krishnamoorthi, A. Yu, V. Y. Kondratenko, S. Pereira, X. Chen, W. Chen, V. Rao, B. Jia, L. Xiong, and M. Smelyanskiy, “Deep learning recommendation model for personalization and recommendation systems,” ArXiv, vol. abs/1906.00091, 2019.

[3] “MLPerf: Fair and useful benchmarks for measuring training and inference performance of ML hardware, software, and services.” [Online]. Available: http://www.mlperf.org/

[4] Maxim Naumov, Dheevatsa Mudigere, and Whitney Zhao, “DLRM workloads with implications on hardware and system platforms.” [Online]. Available: https://2020ocpvirtualsummit.sched.com/event/bXaO/dlrm-workloads-with-implications-on-hardware-and-system-platforms-presented-by-facebook